Transience

Evoking emotion and curiosity with abstract visuals

Duration: 7 Weeks

Role: Interaction Designer, Prototyper (team of 3)

Methods: Interaction Design, Mechatronics, Coding

Background

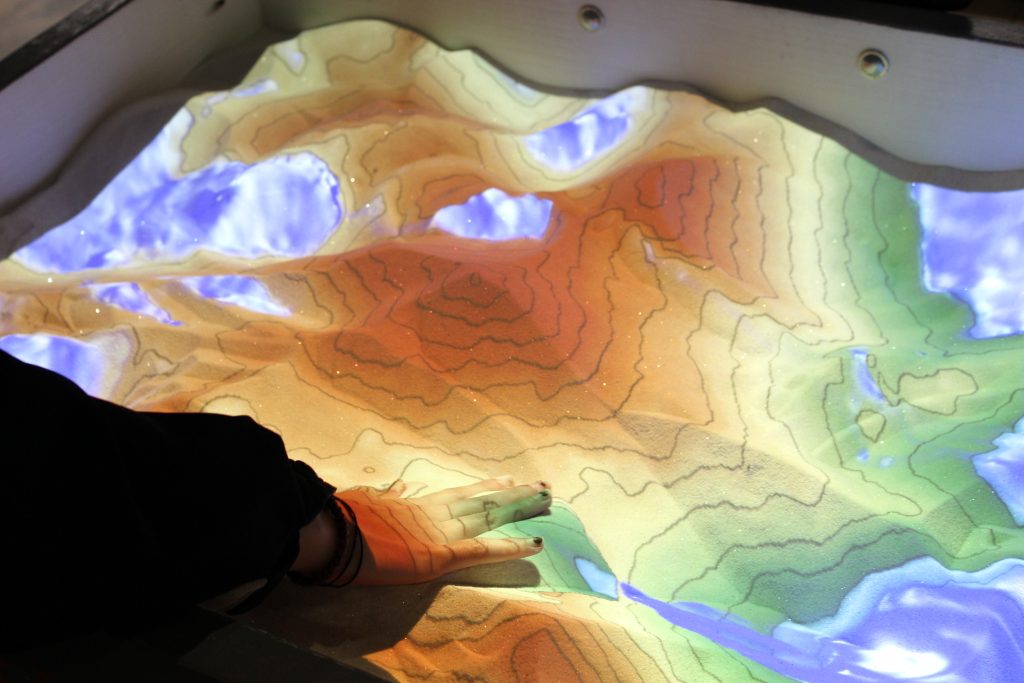

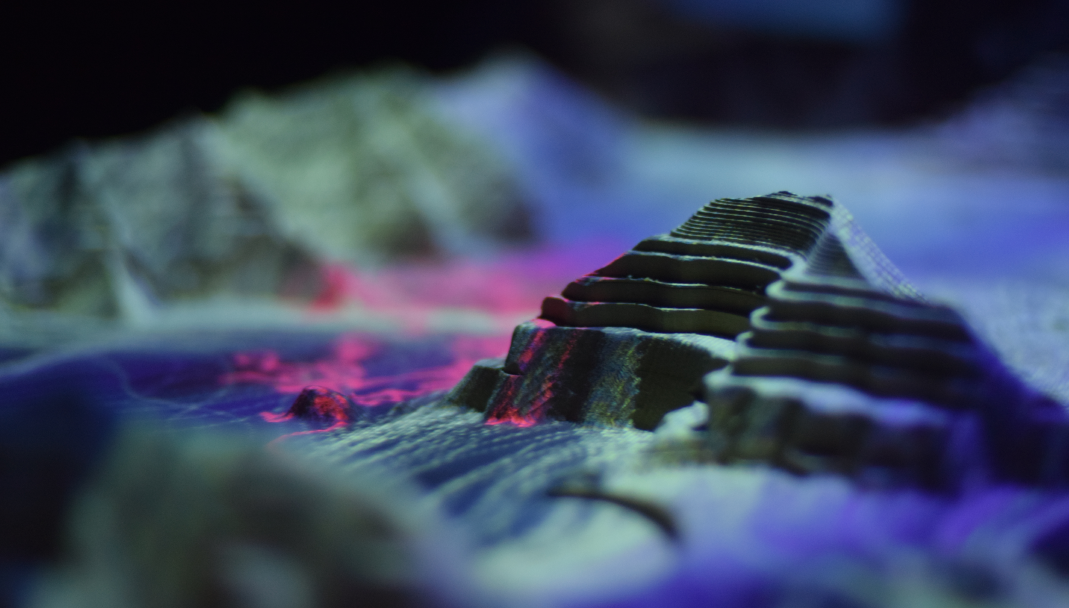

Transience is an interactive exhibition piece that invites users to explore how their proximity and presence warp their perception of a tangible landscape. It does this through a combination of sensors, projection, and abstract modeling.

Ideation

Our original concept was an interactive piece that educated users about the environmental consequences of improper human land use. We were interested in specific, region-based disasters, such as California's growing wildfire challenges or the immediate effects of oil spills, so our original approach focused on realistic, small-scale replications of these events. However, after further deliberation and feedback, we decided to pivot toward a more generalized approach.

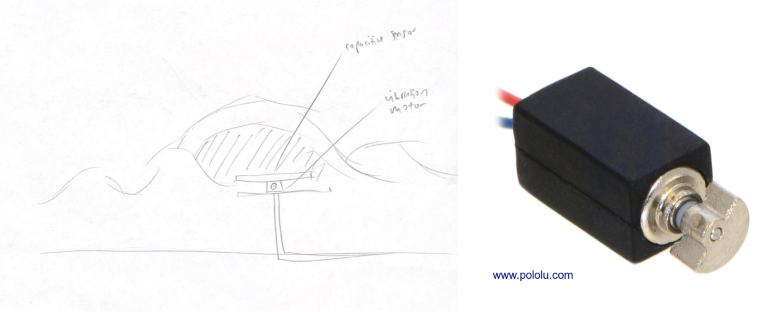

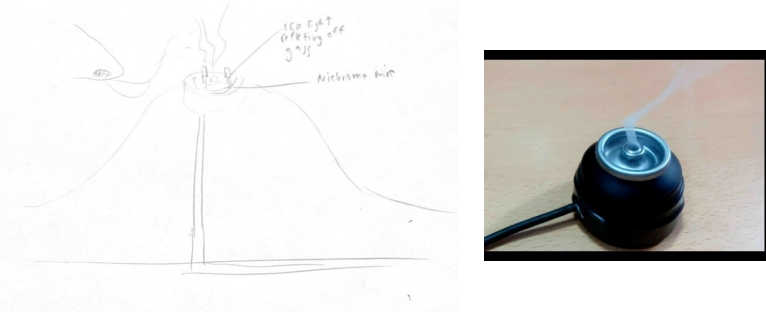

Interaction Sketches

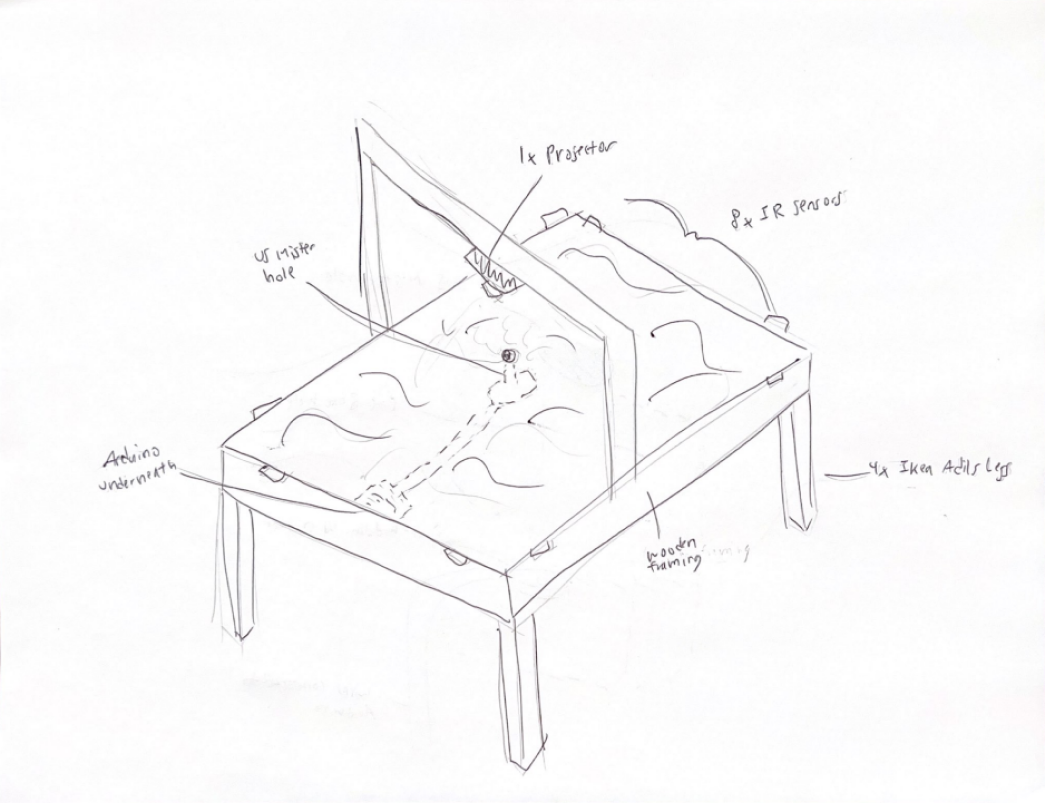

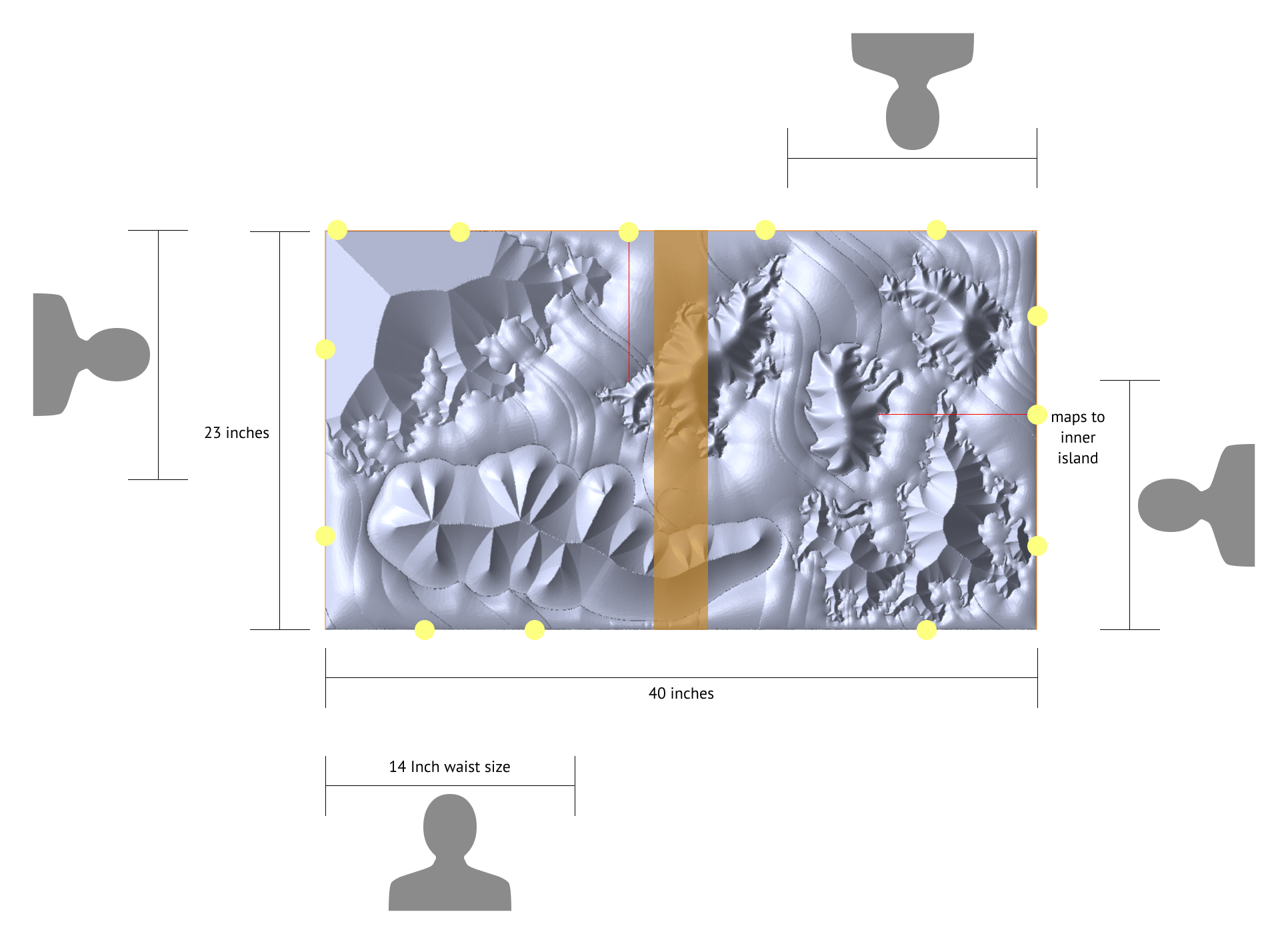

Rather than guide users through a predetermined experience, we wanted to encourage them to explore different combinations of interactions and form their own impressions. We therefore aimed to build an interactive, tangible landscape with organically shaped regions, each representing a different human experience or emotion. These experiences would be evoked through a deliberate mix of texture, color, shape, and sound, driven by embedded sensors and mechanisms.

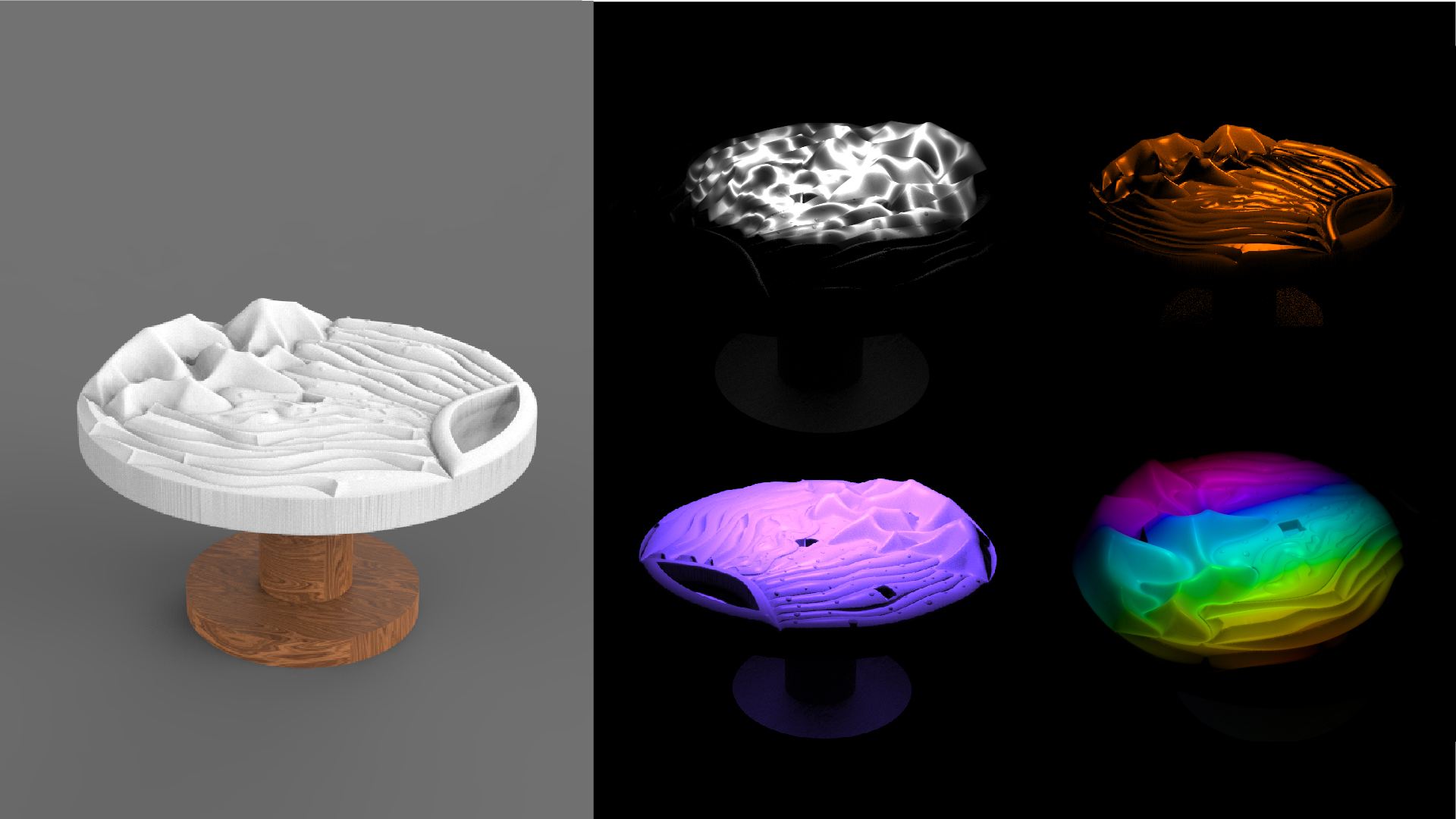

Material Exploration

After the initial model renderings, we tested the look and feel of different materials, such as foam, wood, and plastic, under a projector. We found that 3D surfaces created dynamic shadows as projections passed over them, and that wood gave the most natural, dense feel.

Prototyping

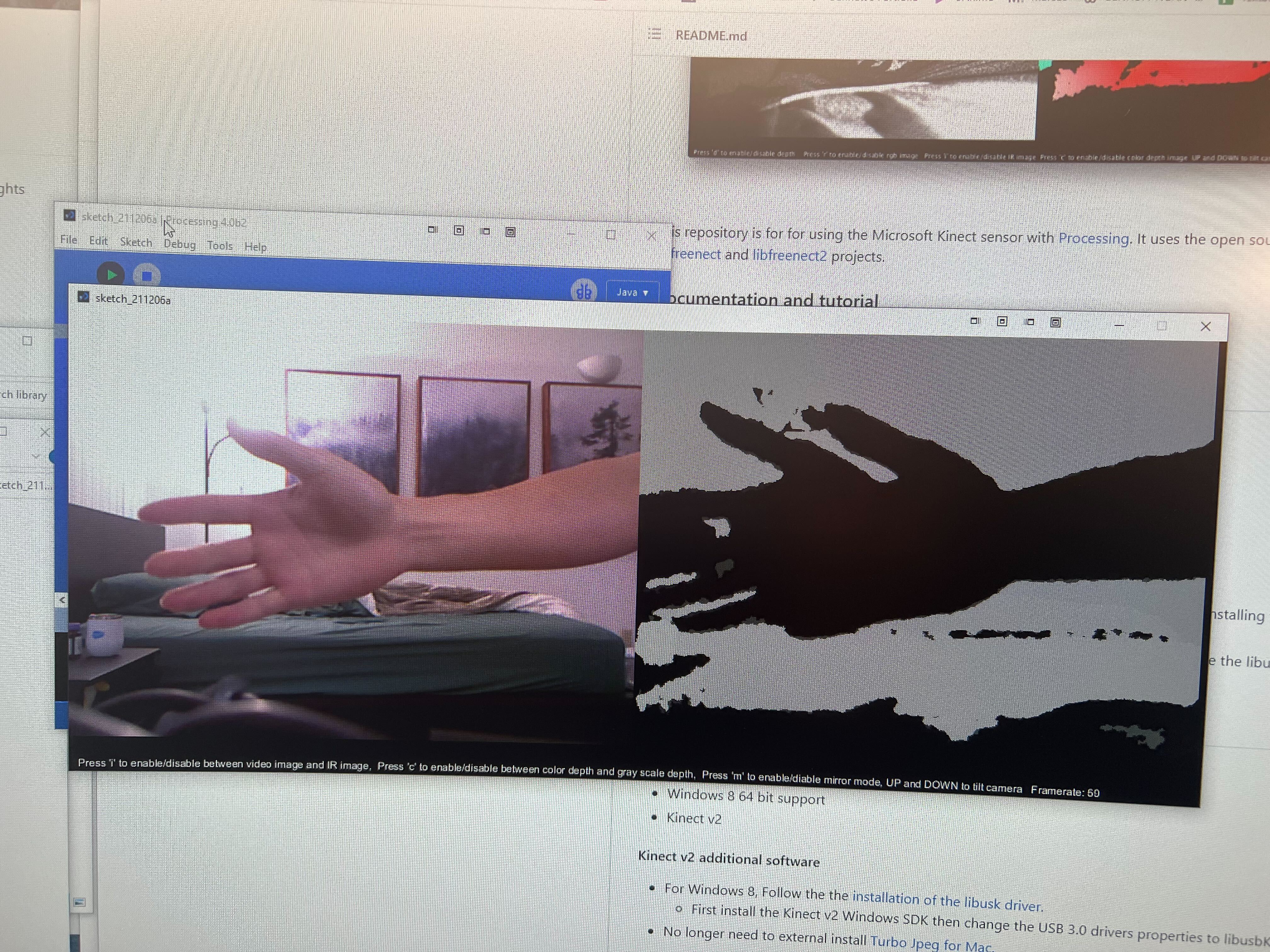

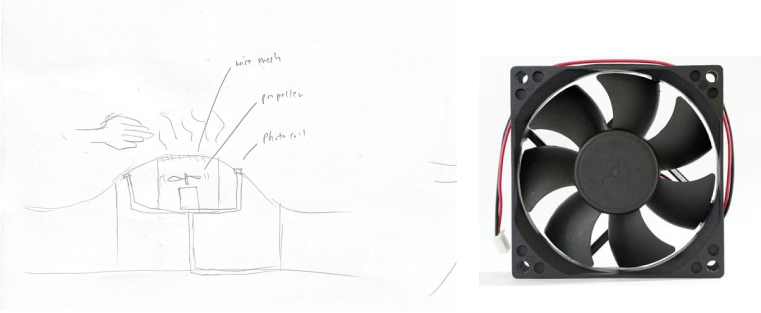

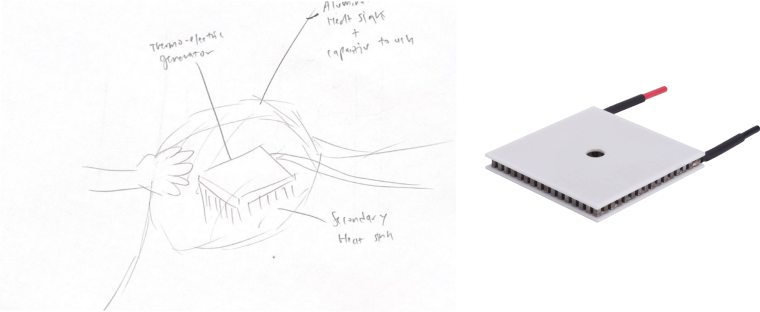

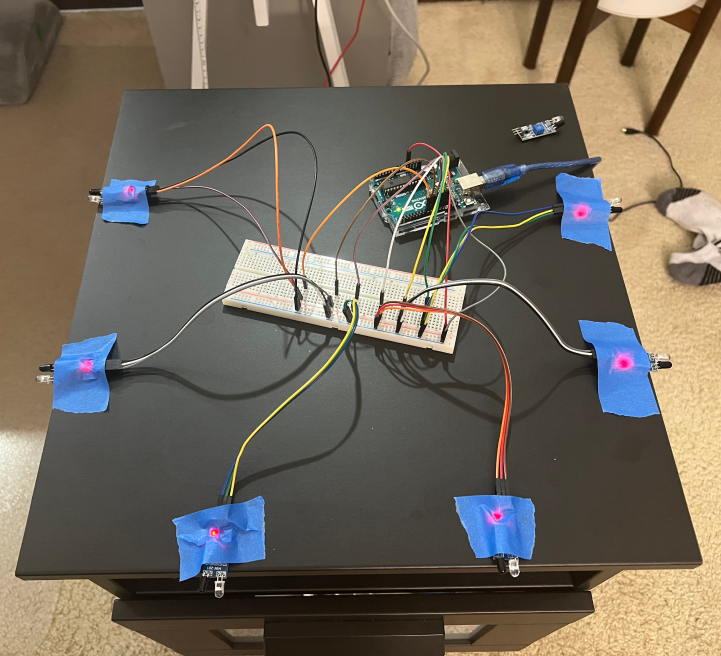

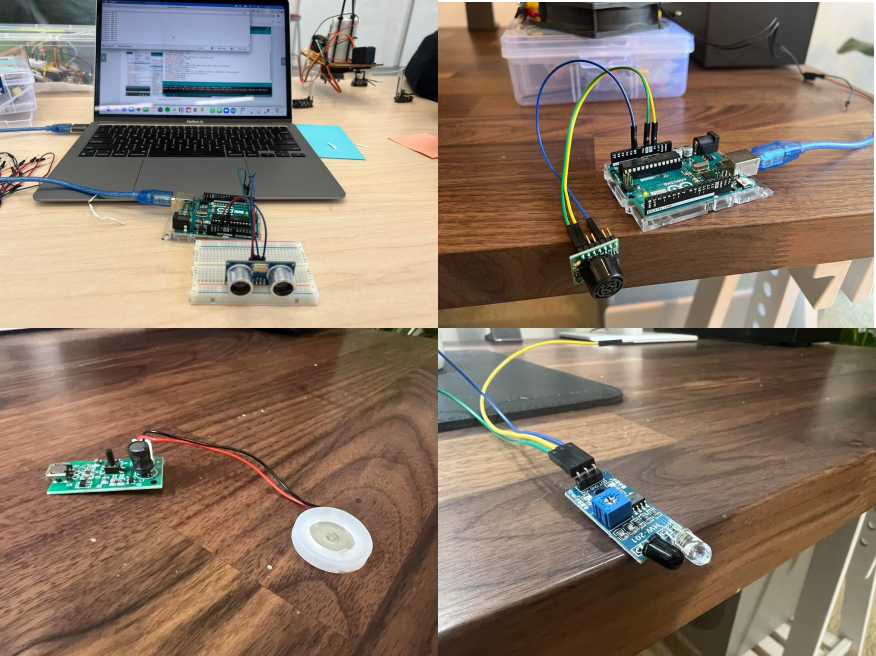

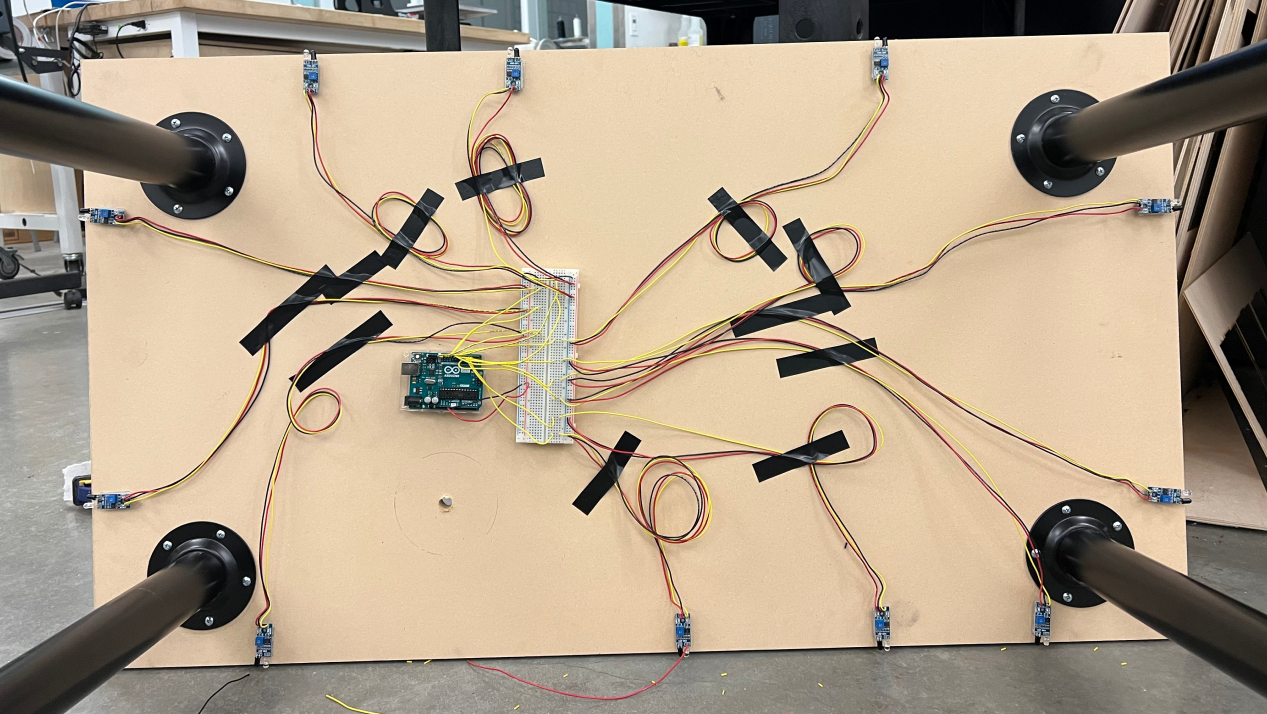

I tested multiple sensors to assess whether they could be embedded in a CNC-milled table piece. For the greatest impact, we decided to build the project around a single, simple core interaction rather than attempt several haphazard ones. Of these sensors and mechanisms, I chose two to build out for our prototype: the IR sensor and the ultrasonic mister.
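The write-up does not say exactly how the IR sensor and the mister were linked. One plausible pairing, sketched below purely as an assumption, is that the mister turns on when a hand comes close to the sensor. The sketch assumes an analog IR distance sensor whose 10-bit reading (0 to 1023, as `analogRead` returns on boards like the Uno) rises as a hand approaches; the thresholds and the `MisterController` name are hypothetical. It uses two thresholds (hysteresis) so that a hand hovering near a single cutoff does not make the mister flicker on and off. The logic is written in Python for readability and would port directly to the Arduino sketch.

```python
# Hypothetical thresholds on a 10-bit analog IR reading (0-1023),
# assuming the reading rises as a hand gets closer to the sensor.
ON_THRESHOLD = 600   # hand close enough: start misting
OFF_THRESHOLD = 450  # hand has clearly moved away: stop misting


class MisterController:
    """Hypothetical controller that toggles a mister from IR readings.

    Two separate thresholds (hysteresis) keep readings that hover near
    one cutoff from switching the mister rapidly on and off."""

    def __init__(self, on_threshold: int = ON_THRESHOLD,
                 off_threshold: int = OFF_THRESHOLD) -> None:
        if off_threshold >= on_threshold:
            raise ValueError("off_threshold must be below on_threshold")
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.misting = False

    def update(self, ir_reading: int) -> bool:
        """Feed one IR reading; return whether the mister should be on."""
        if not self.misting and ir_reading >= self.on_threshold:
            self.misting = True
        elif self.misting and ir_reading <= self.off_threshold:
            self.misting = False
        return self.misting


controller = MisterController()
print([controller.update(r) for r in [300, 620, 550, 500, 440, 580, 610]])
# → [False, True, True, True, False, False, True]
```

Note that the reading of 580 after the hand withdraws does not restart the mister: it must climb back past the on-threshold first.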

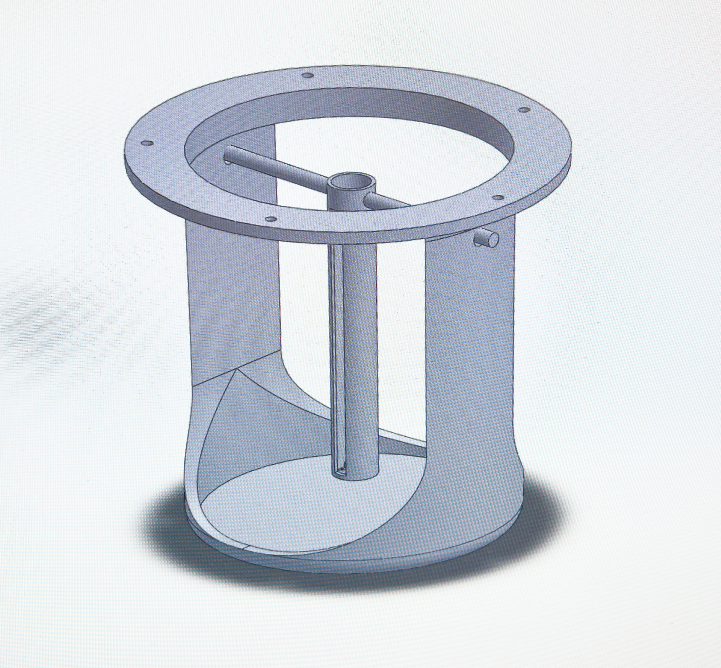

Fabrication

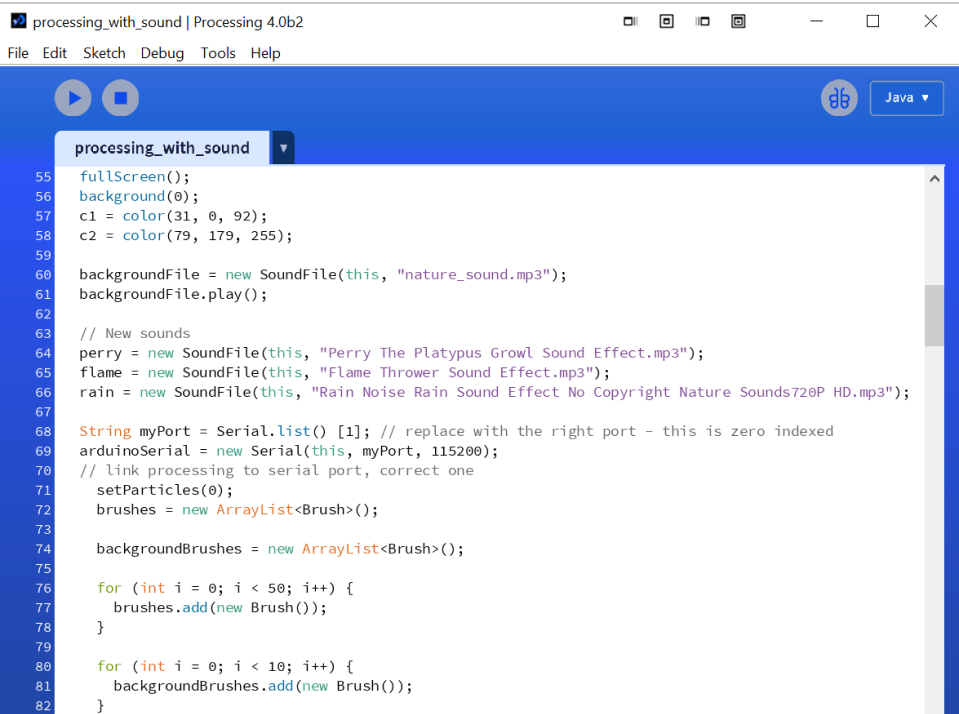

Successive iterations of the components were modeled in SolidWorks and 3D printed, and the sensors were wired to an Arduino that streamed their readings over serial to Processing, where the interaction logic was coded. The table was modeled in Rhino and fabricated over more than 40 hours on a ShopBot PRSalpha CNC router.
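The message format between the Arduino and Processing is not documented here. The following is a minimal sketch that assumes a hypothetical format: one line per reading such as `IR:512,MIST:1`, sent with Arduino's `Serial.println()`, which terminates each line with `\r\n`. Serial data can arrive in arbitrary chunks that split a message partway through, so the receiver must reassemble complete lines before parsing them. `LineBuffer` and `parse_line` are illustrative names written in Python, not part of any library. In Processing itself, the Serial library can handle the reassembly with `bufferUntil('\n')` and `readStringUntil('\n')`.

```python
from typing import Dict, List, Optional


def parse_line(line: str) -> Optional[Dict[str, int]]:
    """Parse one hypothetical 'KEY:VALUE,KEY:VALUE' message into a dict.

    Returns None for malformed lines, such as a partial message caught
    when the port is opened mid-transmission."""
    fields: Dict[str, int] = {}
    for pair in line.strip().split(","):  # strip() also removes the '\r'
        key, sep, value = pair.partition(":")
        if not sep or not key:
            return None
        try:
            fields[key] = int(value)
        except ValueError:
            return None
    return fields


class LineBuffer:
    """Reassembles newline-terminated messages from arbitrary serial chunks."""

    def __init__(self) -> None:
        self._pending = ""

    def feed(self, chunk: str) -> List[str]:
        """Add a chunk; return every line completed so far (without '\\n')."""
        self._pending += chunk
        # The last element is an unfinished line (or "" if the chunk ended on '\n').
        *complete, self._pending = self._pending.split("\n")
        return complete


buf = LineBuffer()
for chunk in ["IR:512,MI", "ST:1\r\nIR:5", "30,MIST:0\r\n"]:
    for line in buf.feed(chunk):
        print(parse_line(line))
# → {'IR': 512, 'MIST': 1}
# → {'IR': 530, 'MIST': 0}
```

Rejecting malformed lines instead of raising keeps the interaction running if a message is corrupted or truncated.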

Future Work

In the future, we intend to expand the project by refining the visual interaction to complement the physical piece. In its current form, the CNC-carved shapes act as a static representation of land; we want to use the different sensors and projections to add a dynamic element to it. For example, the location of the user’s hand on the piece could trigger radially expanding particle effects, or depth mapping could be used to accentuate the beautiful shapes carved out by the CNC rather than mask them.
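As a rough illustration of the first idea, a radially expanding effect can be produced by spawning particles at the hand's position with velocities spaced evenly around a circle and fading each one out as it ages. This is a minimal sketch under stated assumptions. It assumes the hand's location has already been mapped into projector pixel coordinates. `Particle`, `spawn_burst`, `step`, `opacity`, and the default count, speed, and lifetime are all hypothetical. It is written in Python for clarity; in Processing, `step` would run once per `draw()` frame.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Particle:
    x: float
    y: float
    vx: float
    vy: float
    age: int = 0  # frames since spawn


def spawn_burst(x: float, y: float, count: int = 24,
                speed: float = 2.0) -> List[Particle]:
    """Spawn particles at (x, y) moving outward at evenly spaced angles."""
    particles = []
    for i in range(count):
        angle = 2 * math.pi * i / count
        particles.append(Particle(x, y, speed * math.cos(angle),
                                  speed * math.sin(angle)))
    return particles


def step(particles: List[Particle], lifetime: int = 60) -> List[Particle]:
    """Advance every particle one frame; drop those that reach `lifetime`."""
    alive = []
    for p in particles:
        p.x += p.vx
        p.y += p.vy
        p.age += 1
        if p.age < lifetime:
            alive.append(p)
    return alive


def opacity(p: Particle, lifetime: int = 60) -> float:
    """Linear fade from 1.0 at spawn to 0.0 at the end of its lifetime."""
    return max(0.0, 1.0 - p.age / lifetime)


# Hypothetical hand position in projector pixels.
burst = spawn_burst(320, 240)
for _ in range(10):
    burst = step(burst)
# Every particle is now on a ring 20 px (10 frames x 2 px) from the hand.
```

Because every particle shares the same speed, the burst stays a clean expanding ring; adding small random variations to speed or angle would make it look more organic.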