Solar Turbines

Establishing clarity for users in a Contact Us form

Duration: 11 Weeks

Role: UX Design Intern

Team: Product Manager, Business Analyst, UI Designer

Methods: Experience Design, Information Architecture, User Research, AI Prototyping

Project Overview

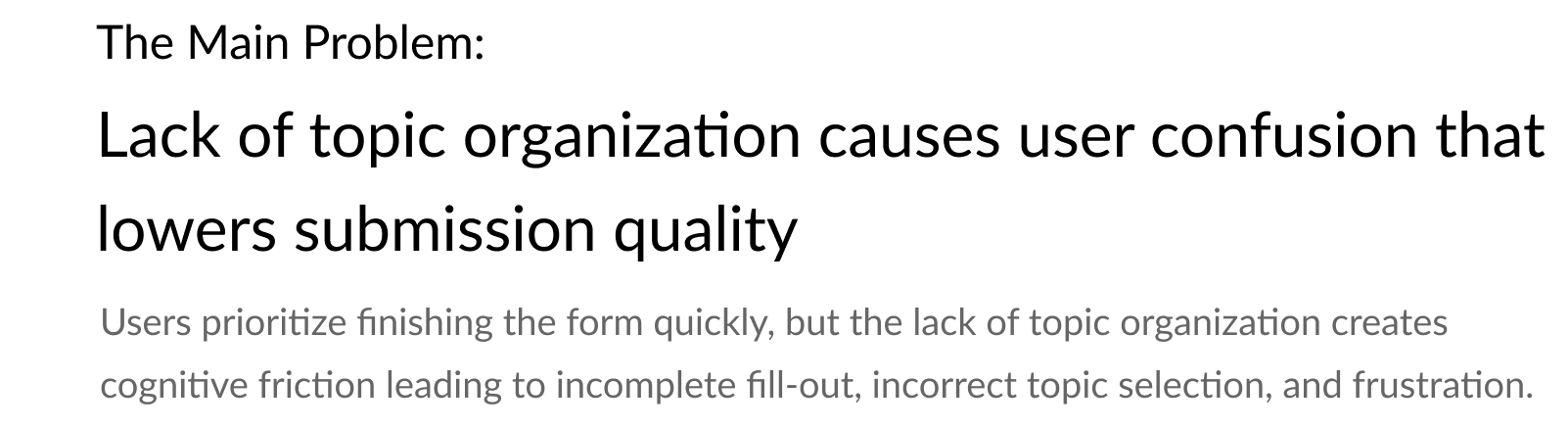

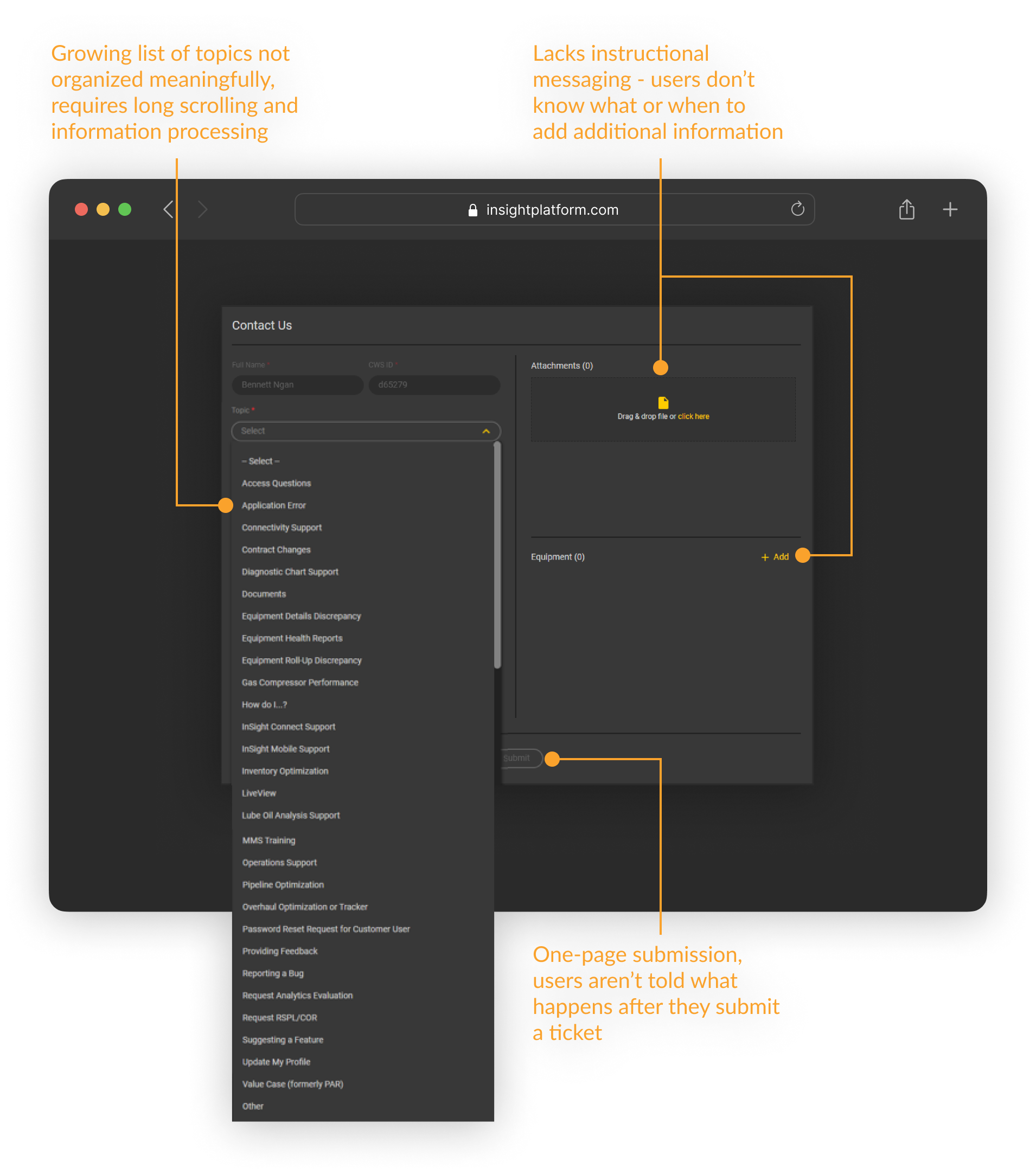

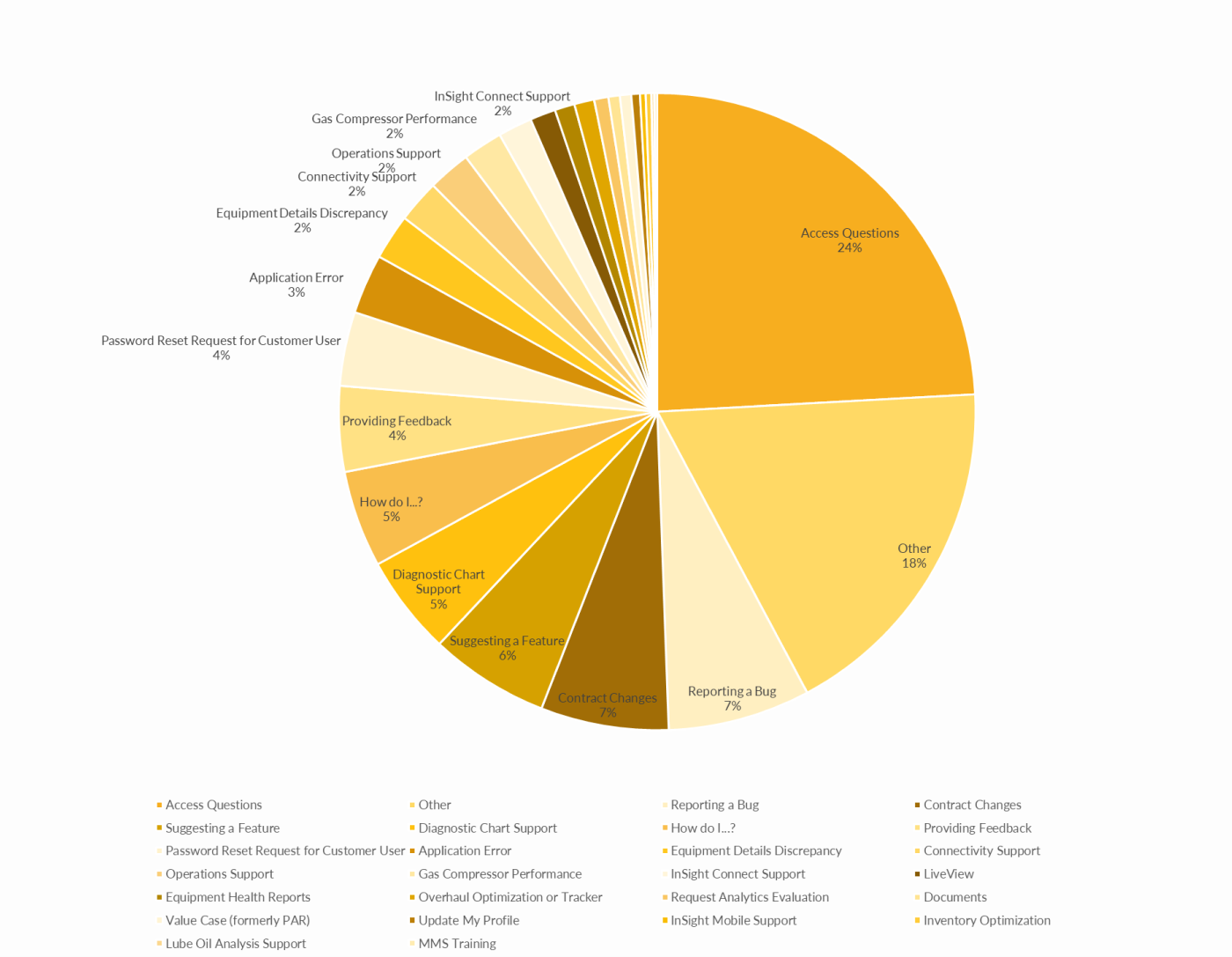

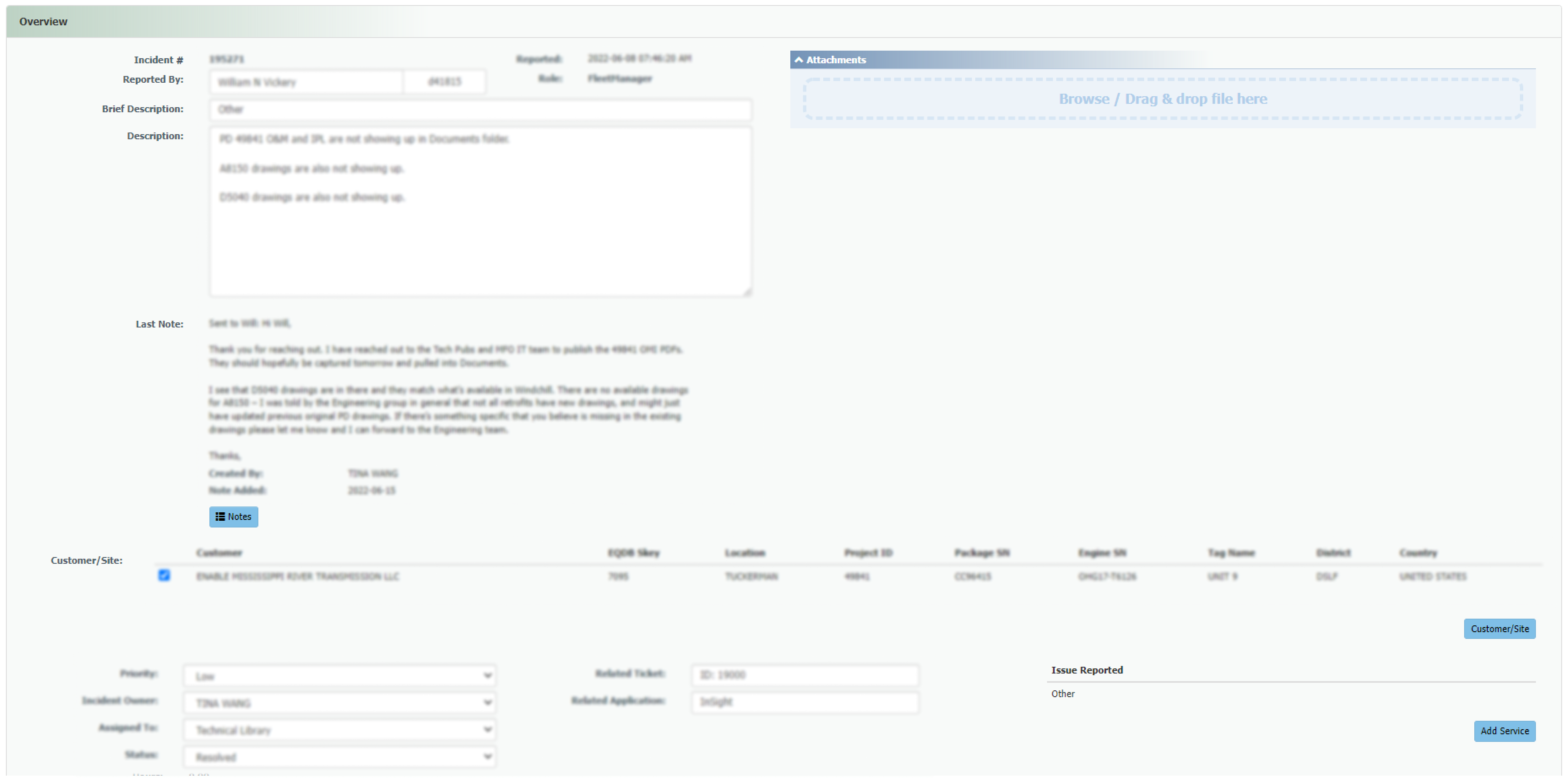

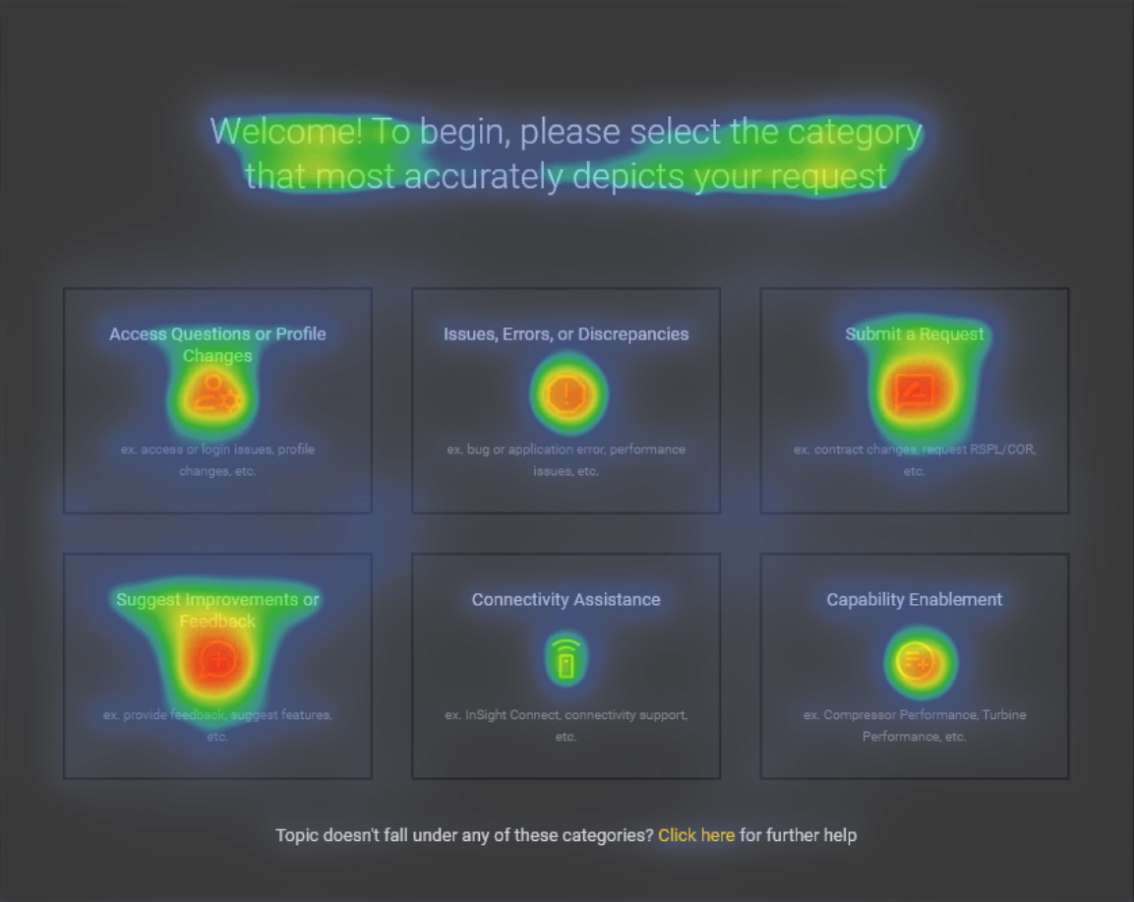

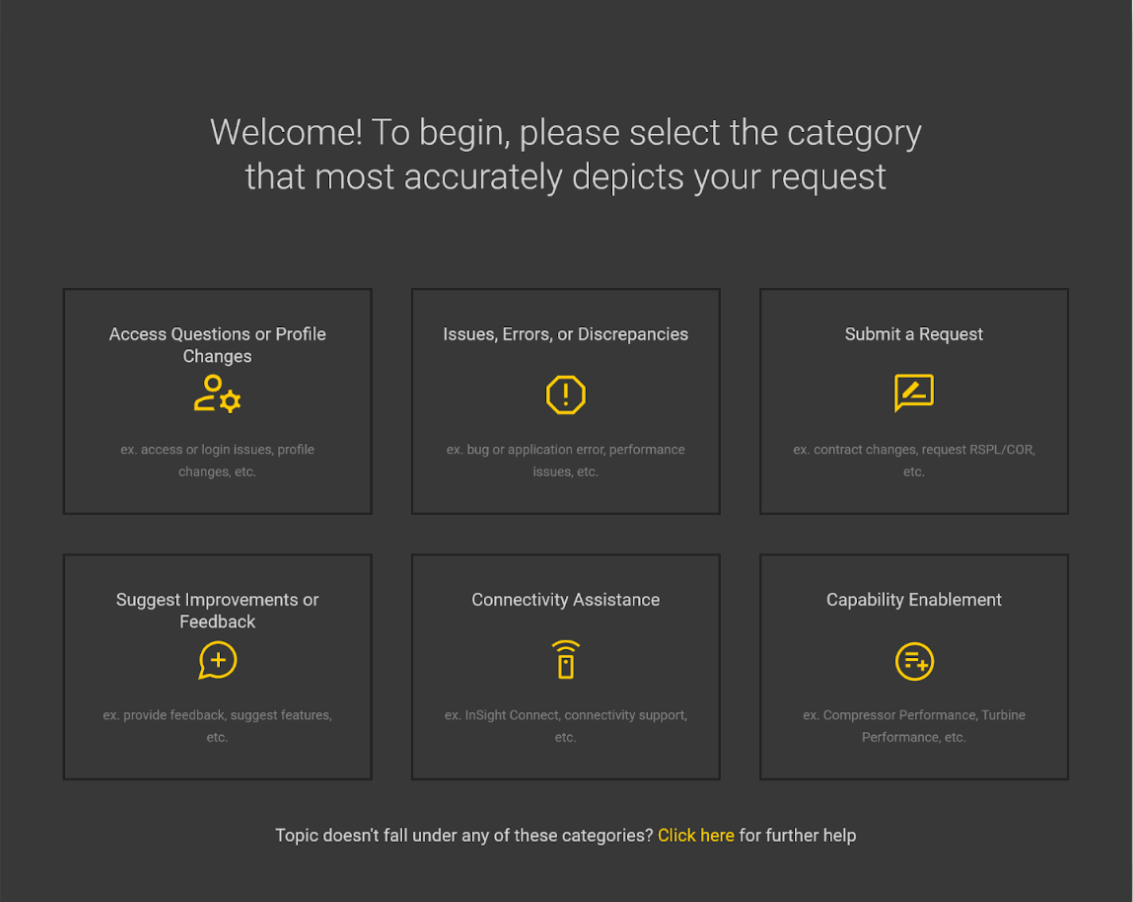

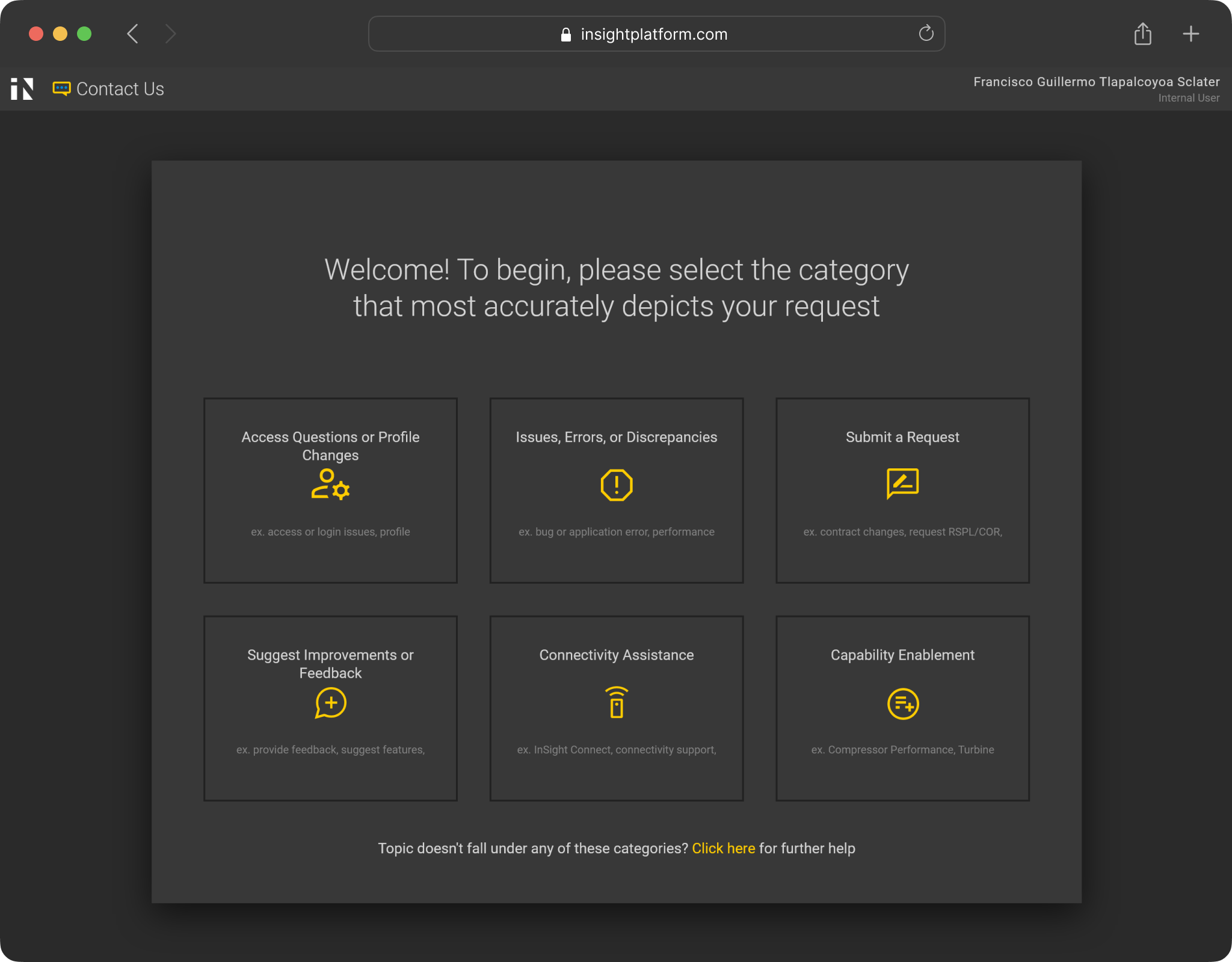

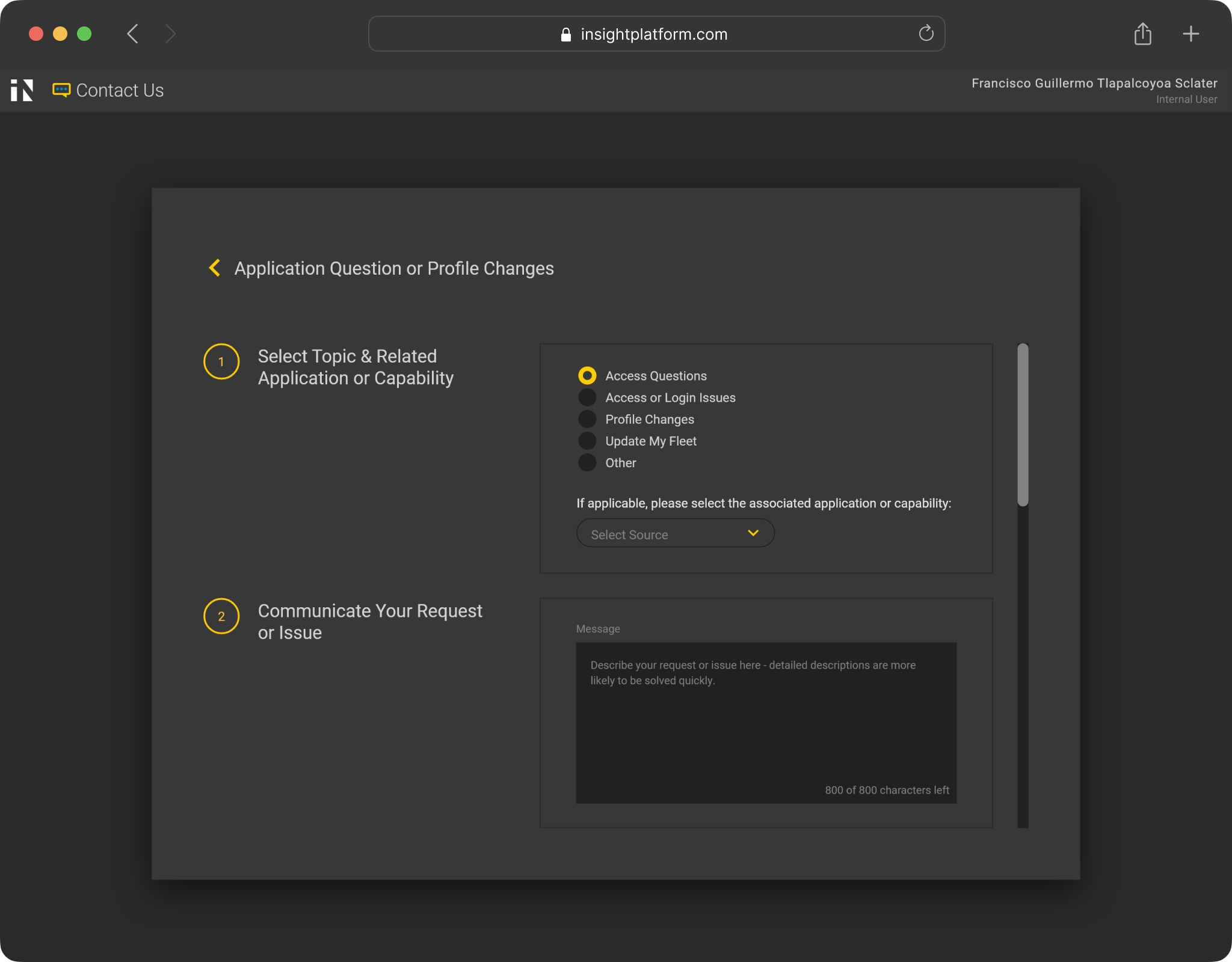

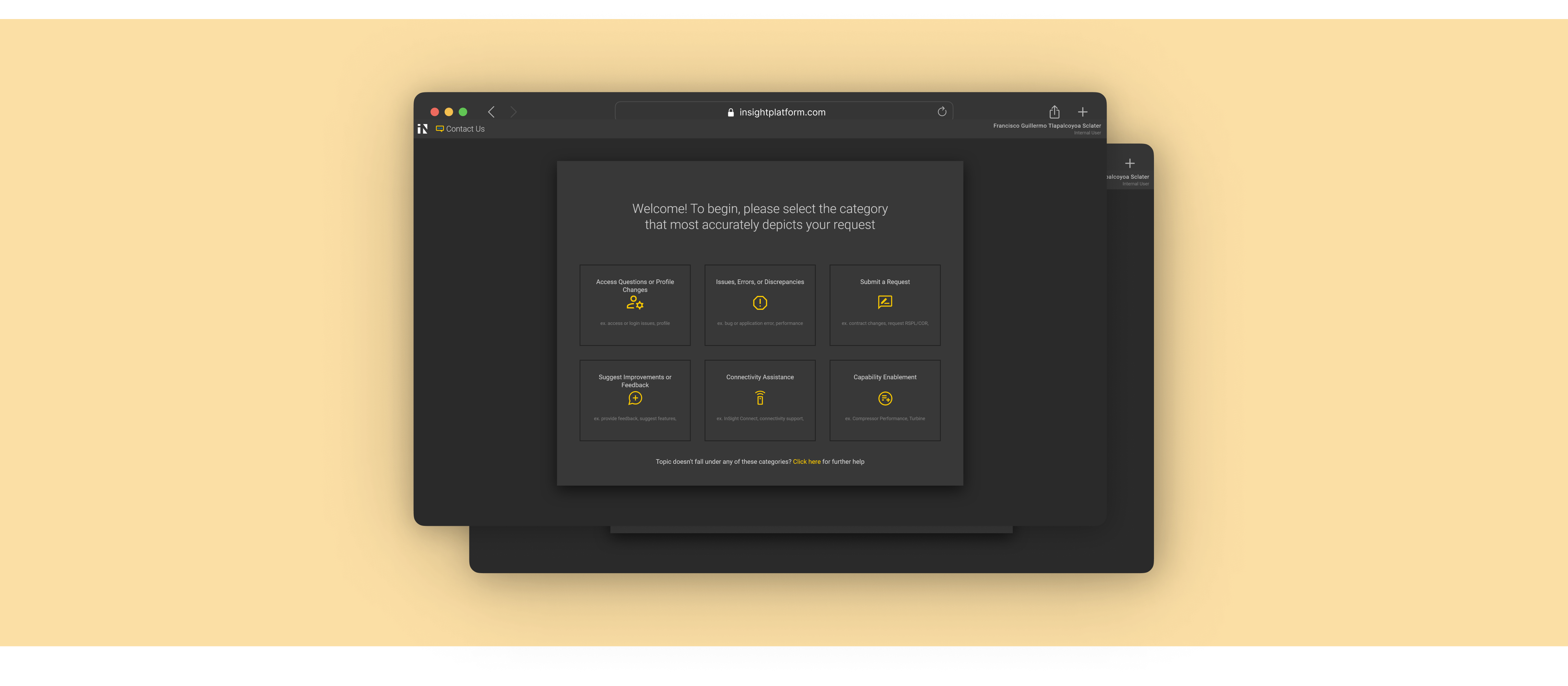

InSight is Solar Turbines’ SaaS platform for remote monitoring of industrial gas turbines. Over the years, its Contact Us form had not evolved to reflect the software’s new features and developments, which led to low-quality submissions and slower ticket resolution.

Longer resolution times directly reduce the bandwidth that internal employees (Subject-Matter Experts, Product Managers, etc.) have for other productive work. They can also cost customers revenue when critical issues lead to turbine downtime.

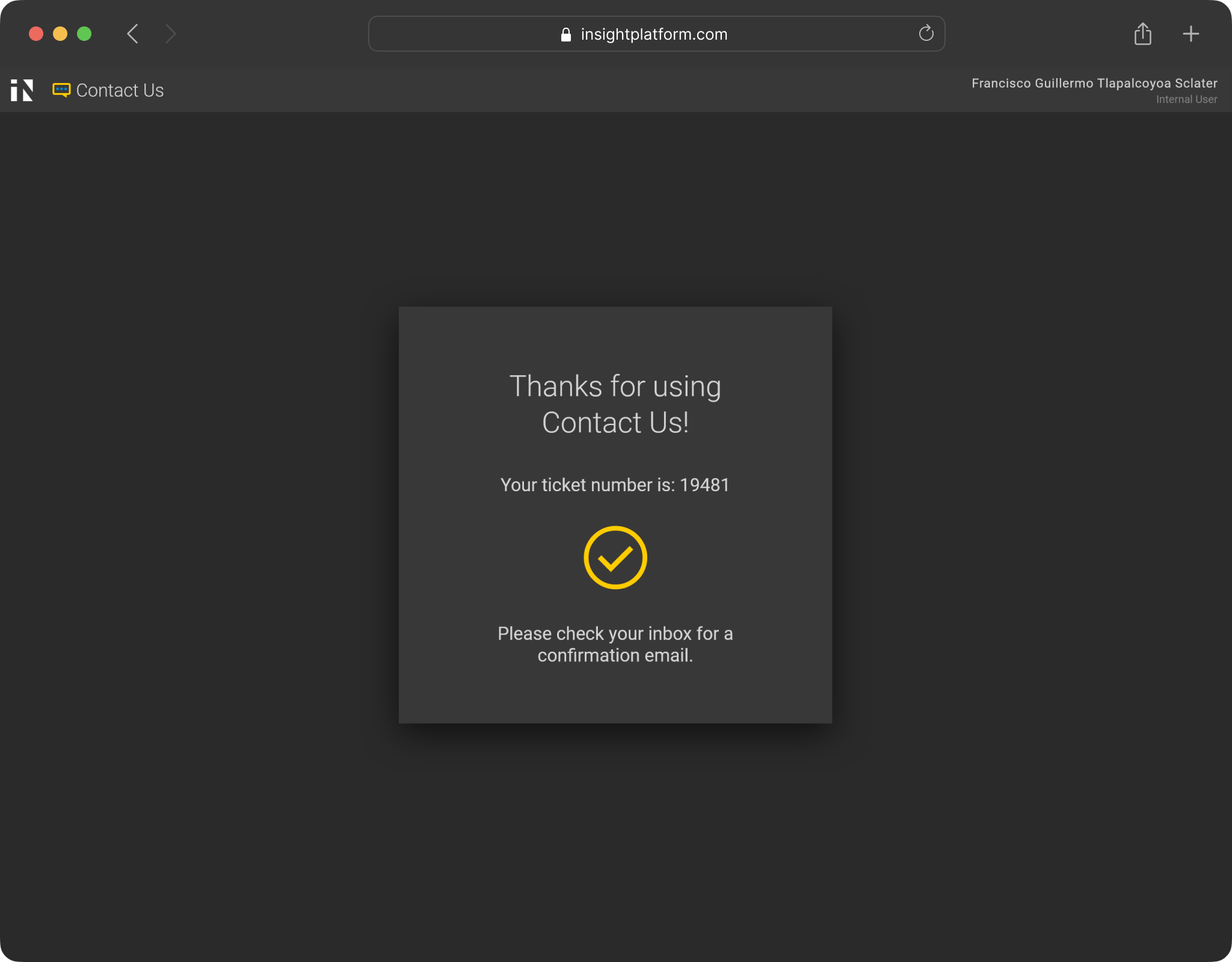

Consequently, I was tasked with redesigning the Contact Us form, which had been identified as a bottleneck in the help request system.

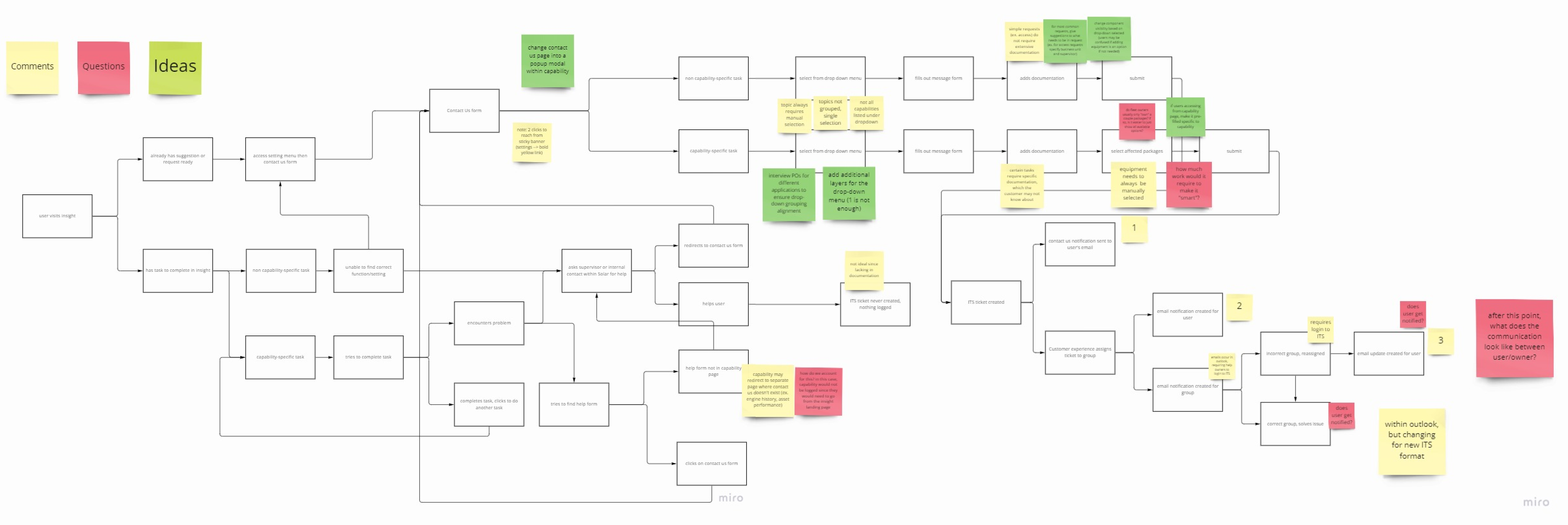

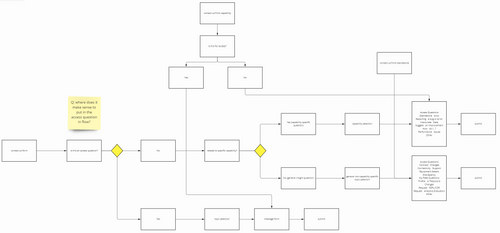

User Flows

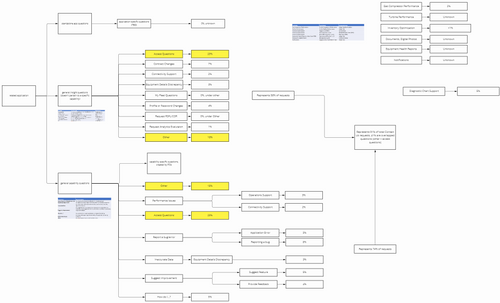

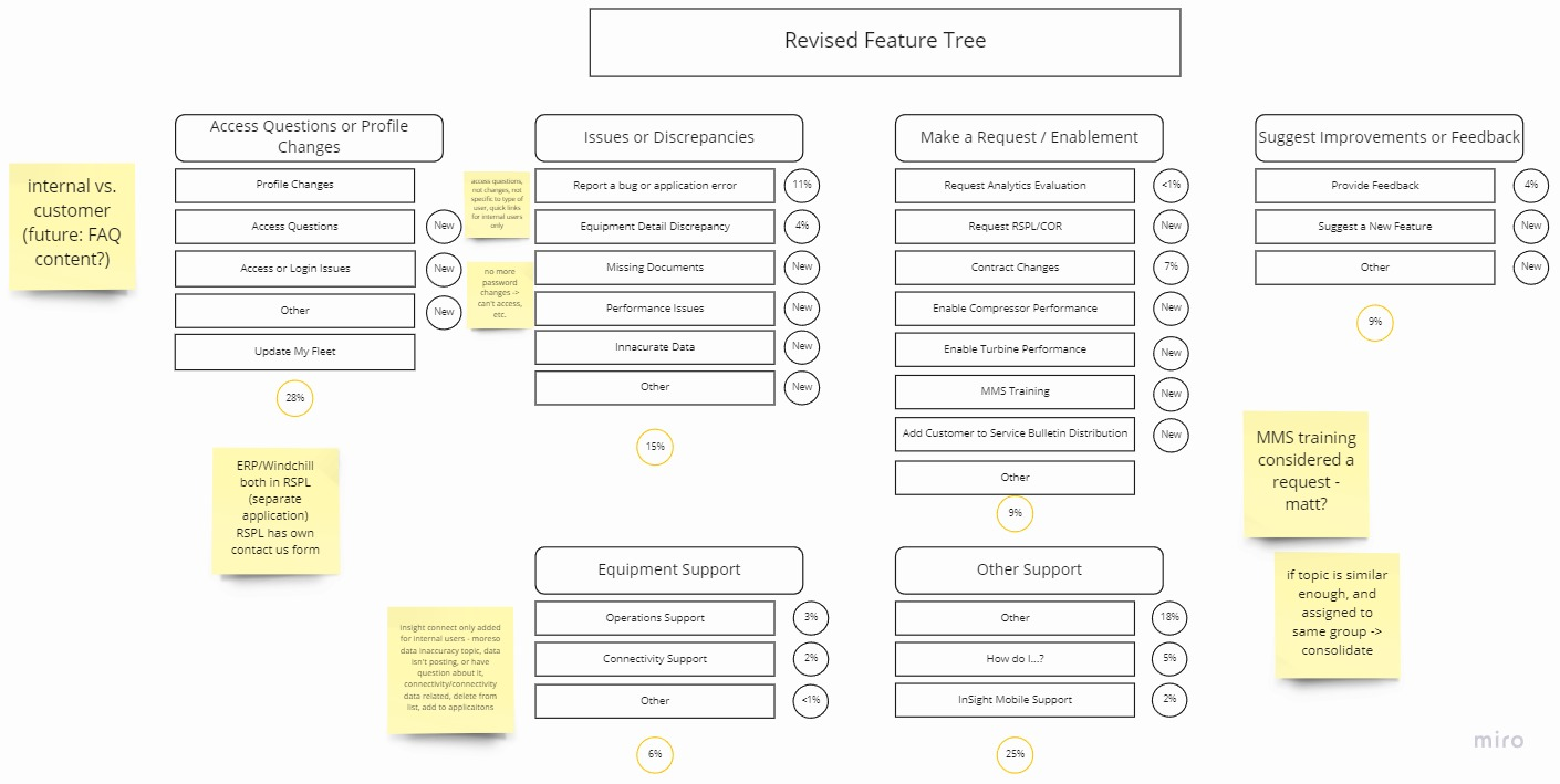

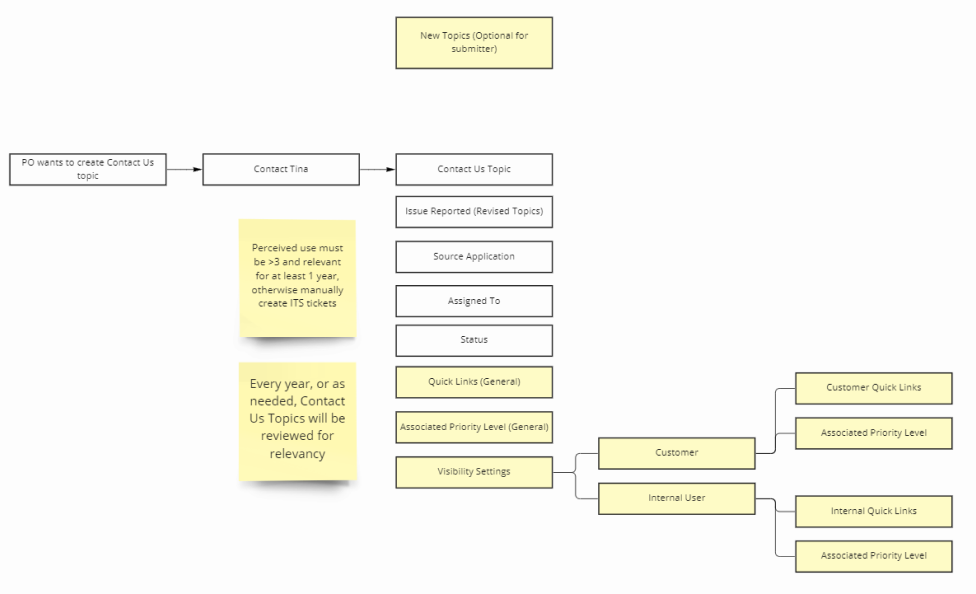

By interviewing several stakeholders and mapping out a comprehensive user flow, I realized that the Contact Us form was the critical entry point for the entire help process: if the form is not done well and thoroughly, every later step of the process suffers.

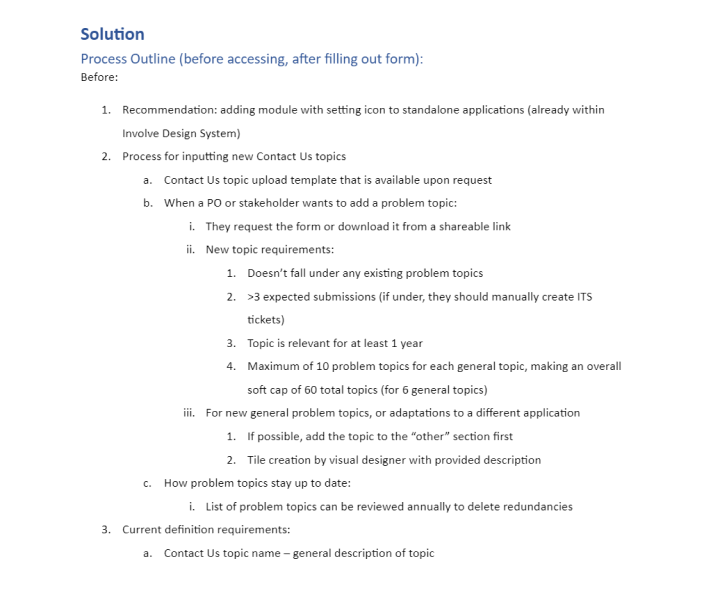

Another insight for the redesign was to prioritize flexibility and adaptability for future iterations. Because topics and features were constantly evolving, the form would benefit greatly from a consistent structure that could accommodate those changes.